FastRouter vs. OpenRouter

Both put a single API in front of every major LLM provider. Past that, the products diverge — on cost, routing depth, evaluations, and the governance tooling that decides whether you can still use either one at $100K/month in spend.

Disclosure. Published by FastRouter. We've tried to keep this honest — there are sections below where OpenRouter is the better pick. Spot something inaccurate? Email us and we'll fix it.

Same shape, different ceiling.

OpenRouter is the easiest way to try every model on the market through one API key. The catalog is huge, the docs are clean, and you can be productive in under 10 minutes. For prototyping, side projects, and personal tools, it's hard to beat.

FastRouter is built for the moment your LLM bill becomes a real line item. Zero markup on inference, 7 routing strategies (including category-based and AI Auto Router), production evaluations, GEPA prompt optimization, and shared workspace budgets that actually act as kill-switches. The trade-off: less of a "browse-every-model" surface, more of an operational gateway.

If your traffic is real and growing, the 5.5% credit fee plus the gaps in evals, prompt optimization, and shared budget enforcement add up faster than you'd think. If you're tinkering, OpenRouter is fine. The decision tree is at the bottom.

The four numbers that usually decide it.

| Metric | FastRouter | OpenRouter |
|---|---|---|
| 1) Markup on inference | 0% with BYOK | 5.5% on credits |
| 2) Routing strategies | 7 (incl. AI Auto) | 2 (price/latency) |
| 3) E2E latency P50 | 3.23s | 5.23s |
| 4) Production evals | Smart + Auto + GEPA | None built in |

Feature matrix

Where the two products genuinely differ, ranked roughly by how much it tends to matter at production scale. ✓ = supported, ✗ = not supported, ◑ = partial.

| Capability | FastRouter | OpenRouter |
|---|---|---|
| Markup on API calls | 0% with BYOK | 5.5% on credit purchases (5% on crypto) |
| Model catalog | Unified catalog across major frontier & open providers | 290+ models, 60+ inference providers |
| Routing strategies | 7: category, priority, lowest latency, lowest price, highest throughput, weighted, AI Auto | 2: price, latency |
| AI Auto Model Router | ✓ Picks model per request from cost/latency/quality signals | ✗ |
| Category-based routing | ✓ Map prompt classes to model groups | ✗ |
| Smart Evaluations on production traffic | ✓ AI quality scoring on live calls | ✗ |
| Automatic Evaluations | ✓ Background sampling & benchmarking | ✗ |
| GEPA prompt optimization | ✓ Proprietary evolutionary optimizer | ✗ |
| Video evaluations | ✓ Compare models on video inputs | ✗ |
| MCP credential vaulting | ✓ Agents never see raw provider keys | ✗ |
| Workspaces / teams | ✓ Shared workspace budgets that hard-stop | ◑ Workspaces with Admin/Member roles, spend caps per-user / per-key |
| Shared project-level budget caps | ✓ Enforced at workspace level | ✗ Per-user / per-key only |
| 7-day passive audit on existing traffic | ✓ No code changes | ✗ |
| OpenAI-compatible endpoint | ✓ | ✓ |
| OAuth / PKCE for end-user keys | ◑ Programmatic provisioning | ✓ Public PKCE flow for consumer apps |
| Embeddings API | ✓ | ✓ |
| OpenTelemetry export | ✓ | ✓ Broadcast to Grafana, SigNoz, Langfuse, etc. |
| Free tier | 7-day audit + free dev tier | 25+ free models, no credit card |
| Hosting / data residency | Multi-region, ZDR by default | EU region locking on Enterprise plan |

Reading this matrix fairly

OpenRouter ships a working PKCE OAuth flow that's actually a great fit for consumer apps where each end user authenticates with their own OpenRouter account. FastRouter's auth model assumes you own the keys and provision programmatically. If your product needs end-user-attached keys, that's a real OpenRouter advantage.

Where the two products diverge most

OpenRouter's routing is fundamentally a sort plus a fallback list. You either sort: "price" for the lowest-cost provider serving a model, or sort: "latency" for the fastest. You can declare a fallback chain (model A, then B, then C) and OpenRouter de-prioritizes providers that errored in the last 30 seconds. That's the model.
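To make "a sort plus a fallback list" concrete, here is a minimal sketch of that ordering logic. It is not OpenRouter's implementation: the `Provider` fields, the `order_providers` helper, and the sample numbers are all hypothetical. Only the two sort keys and the 30-second error window come from the description above.

```python
from dataclasses import dataclass
from typing import Optional

ERROR_COOLDOWN_S = 30.0  # from the text: providers that errored in the last 30 seconds


@dataclass
class Provider:
    name: str
    price_per_mtok: float                  # hypothetical unit: USD per million tokens
    latency_ms: float                      # hypothetical recent latency measurement
    last_error_at: Optional[float] = None  # timestamp of the most recent error, if any


def order_providers(providers, sort, now):
    """Return providers in the order they would be tried for one model."""
    metric = {
        "price": lambda p: p.price_per_mtok,
        "latency": lambda p: p.latency_ms,
    }[sort]

    def recently_errored(p):
        return p.last_error_at is not None and now - p.last_error_at < ERROR_COOLDOWN_S

    # Healthy providers first (False sorts before True), then by the chosen metric.
    return sorted(providers, key=lambda p: (recently_errored(p), metric(p)))


providers = [
    Provider("alpha", price_per_mtok=0.20, latency_ms=900, last_error_at=995.0),
    Provider("beta", price_per_mtok=0.35, latency_ms=400),
    Provider("gamma", price_per_mtok=0.25, latency_ms=650),
]

# "alpha" is cheapest but errored 5 seconds before `now`, so it drops to the back.
by_price = [p.name for p in order_providers(providers, "price", now=1000.0)]
by_latency = [p.name for p in order_providers(providers, "latency", now=1000.0)]
print(by_price)    # ['gamma', 'beta', 'alpha']
print(by_latency)  # ['beta', 'gamma', 'alpha']
```

The model itself is fixed before this function runs, which is the point of the comparison: the sort only chooses among providers for a model the application already picked.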

FastRouter exposes seven strategies as first-class primitives that compose. Some operate at the provider layer (lowest price across providers serving a model, lowest latency, highest throughput, weighted shuffle for traffic splitting, priority chains for strict failover order). Some operate at the model layer (category-based routing maps prompt classes to model groups; the AI Auto Model Router picks the model per request from real-time cost, latency, and quality signals). The two layers compose — you can pick the right model for the request and pick the right provider for that model in the same call.
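The two-layer composition can be sketched in a few lines. This is an illustration of the idea only, not FastRouter's API: the category map, the provider price table, the toy `classify` function, and every model and provider name below are made up for the example.

```python
# Hypothetical data; none of these names are real models or providers.
CATEGORY_TO_MODELS = {
    "code": ["model-large"],
    "chat": ["model-small", "model-large"],
}
PROVIDER_PRICES = {  # USD per million tokens, per provider serving each model
    "model-small": {"prov-a": 0.10, "prov-b": 0.08},
    "model-large": {"prov-a": 1.20, "prov-c": 1.05},
}


def classify(prompt):
    """Toy stand-in for whatever assigns a prompt to a class."""
    return "code" if "def " in prompt or "import " in prompt else "chat"


def route(prompt):
    """Pick a model for the prompt class, then a provider for that model."""
    category = classify(prompt)
    model = CATEGORY_TO_MODELS[category][0]   # model layer: first model in the group
    prices = PROVIDER_PRICES[model]
    provider = min(prices, key=prices.get)    # provider layer: lowest price
    return category, model, provider


print(route("Fix this: def add(a, b): return a - b"))  # ('code', 'model-large', 'prov-c')
print(route("Say hello in French"))                    # ('chat', 'model-small', 'prov-b')
```

Swapping `min(prices, key=prices.get)` for a latency or throughput lookup changes the provider-layer strategy without touching the model-layer choice, which is what "the two layers compose" means in practice.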

Both products can fail over on errors and rank providers. The depth difference is whether the gateway also reasons about which model to use in the first place, or whether your application has to decide that ahead of time.

OpenRouter routes between providers for the model you picked. FastRouter does that too — and can also pick the model itself based on the request.

The capability OpenRouter doesn't ship at all

OpenRouter has built a clean observability layer — usage breakdowns, model and key-level analytics, optional input/output logging (with a 1% discount as the carrot), and OpenTelemetry broadcast to external observability stacks. What it does not ship is anything that closes the loop: no eval suite, no prompt optimization, no automated quality scoring on production traffic.

FastRouter ships three features that close that loop:

- Smart Evaluations — AI-powered quality scoring on live calls. Surfaces the model that's actually delivering the best output for your use case without you sampling manually.

- Automatic Evaluations — background sampler that benchmarks competing models against each other on a slice of your real traffic. Optimization opportunities surface on their own.

- GEPA — Generative Evolutionary Prompt Architecture iterates across prompt variations and model combinations to find a Pareto-optimal prompt for your workload.

If you'd rather use Langfuse, Braintrust, or a homegrown eval pipeline, OpenRouter's Broadcast feature makes the wiring painless. The trade-off is that you're now operating two platforms instead of one, and the eval signals don't influence routing decisions automatically.

The 5.5% problem.

OpenRouter charges 5.5% on credit card top-ups (5.0% on crypto). It is not a per-token markup; it's a percentage of every dollar loaded into the platform. There's no inference-margin charge on top of that, which is a fair model for prototyping. BYOK is also supported: the first 1M requests per month are free, with a 5% fee on overage.

FastRouter charges 0% markup on API calls when you BYOK. The platform fee structure is a flat managed-service fee instead of a percentage of spend, which means the gateway tax doesn't compound as your usage grows.

The math at three scales:

- $2K/month inference spend. OpenRouter ≈ $110/month in fees. FastRouter ≈ $0 in markup. The gap rounds to lunch money — neither is the wrong choice.

- $10K/month. OpenRouter ≈ $550/month, ~$6,600/year. The gap starts to be noticeable; it's roughly the cost of a single mid-sized eval cycle.

- $100K/month. OpenRouter ≈ $5,500/month, ~$66,000/year — before any inference is run. The gap is now a hire, or six months of an eval platform.
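The three figures above are straightforward to reproduce. A minimal sketch, assuming (as the bullets do) that credits purchased equal inference spend and that everything is bought by card at the 5.5% rate; the `gateway_fee` helper is just for illustration.

```python
CARD_FEE_RATE = 0.055  # 5.5% on credit purchases, from the text


def gateway_fee(monthly_spend_usd, rate=CARD_FEE_RATE):
    """Return (monthly_fee, yearly_fee) in USD for a given monthly spend."""
    monthly = monthly_spend_usd * rate
    return round(monthly, 2), round(monthly * 12, 2)


for spend in (2_000, 10_000, 100_000):
    monthly, yearly = gateway_fee(spend)
    print(f"${spend:,}/month spend -> ${monthly:,.0f}/month, ${yearly:,.0f}/year in fees")
```

To model crypto top-ups instead, pass `rate=0.05`.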

Read this if you're on OpenRouter today

The 5.5% fee is on credit purchases, not consumption — so unused credits don't refund the fee. Teams that pre-load larger top-ups to reduce Stripe fees end up locked in to the gateway tax even on dollars they haven't spent yet.

Workspace budgets that actually act as kill-switches

OpenRouter shipped Workspaces in 2025 with Admin and Member roles, per-workspace API keys, model and provider allowlists, and per-user / per-API-key spend caps. That's a meaningful baseline — and it's enough for a lot of teams. What it doesn't have is a shared, project-level budget pool that hard-stops the workspace. Caps apply to the user or the key, not the workspace as a single budget. If you have ten developers each with a $200/month cap, your workspace ceiling is $2,000 — even if you wanted the workspace ceiling to be $1,000 split however the team uses it.
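The difference between per-key caps and a shared pool is easiest to see side by side. The sketch below is a toy model of the two budget shapes, not either product's implementation; `SharedBudget` and `BudgetExceeded` are hypothetical names, and the dollar amounts come from the ten-developer example above.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a charge would push the pool past its limit."""


@dataclass
class SharedBudget:
    limit_usd: float
    spent_usd: float = 0.0

    def charge(self, amount_usd):
        # Hard stop: reject the charge instead of alerting after the fact.
        if self.spent_usd + amount_usd > self.limit_usd:
            raise BudgetExceeded(f"would exceed ${self.limit_usd:,.0f} pool")
        self.spent_usd += amount_usd


# Per-key caps: the workspace ceiling is simply the sum of the individual caps.
per_key_caps = {f"dev-{i}": 200.0 for i in range(10)}
effective_ceiling = sum(per_key_caps.values())
print(effective_ceiling)  # 2000.0

# Shared pool: one $1,000 limit, split however the team actually uses it.
pool = SharedBudget(limit_usd=1000.0)
pool.charge(700.0)   # one heavy user may exceed what a $200 per-key cap would allow
pool.charge(250.0)
try:
    pool.charge(100.0)   # 950 + 100 > 1000, so the pool refuses
    blocked = False
except BudgetExceeded:
    blocked = True
print(blocked, pool.spent_usd)  # True 950.0
```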

FastRouter's governance model treats the workspace itself as the budget unit. Caps act as kill-switches at the workspace boundary, not just the key. MCP credential vaulting means agents and tool callers never see raw provider keys — they call FastRouter, which injects credentials server-side. For teams running agentic workloads, that closes a real exfiltration surface.

On Zero Data Retention, both are reasonable. OpenRouter is metadata-only by default and ships per-workspace ZDR enforcement that routes only to ZDR-compliant endpoints. FastRouter is ZDR by default. Neither stores prompts or completions unless you opt in.

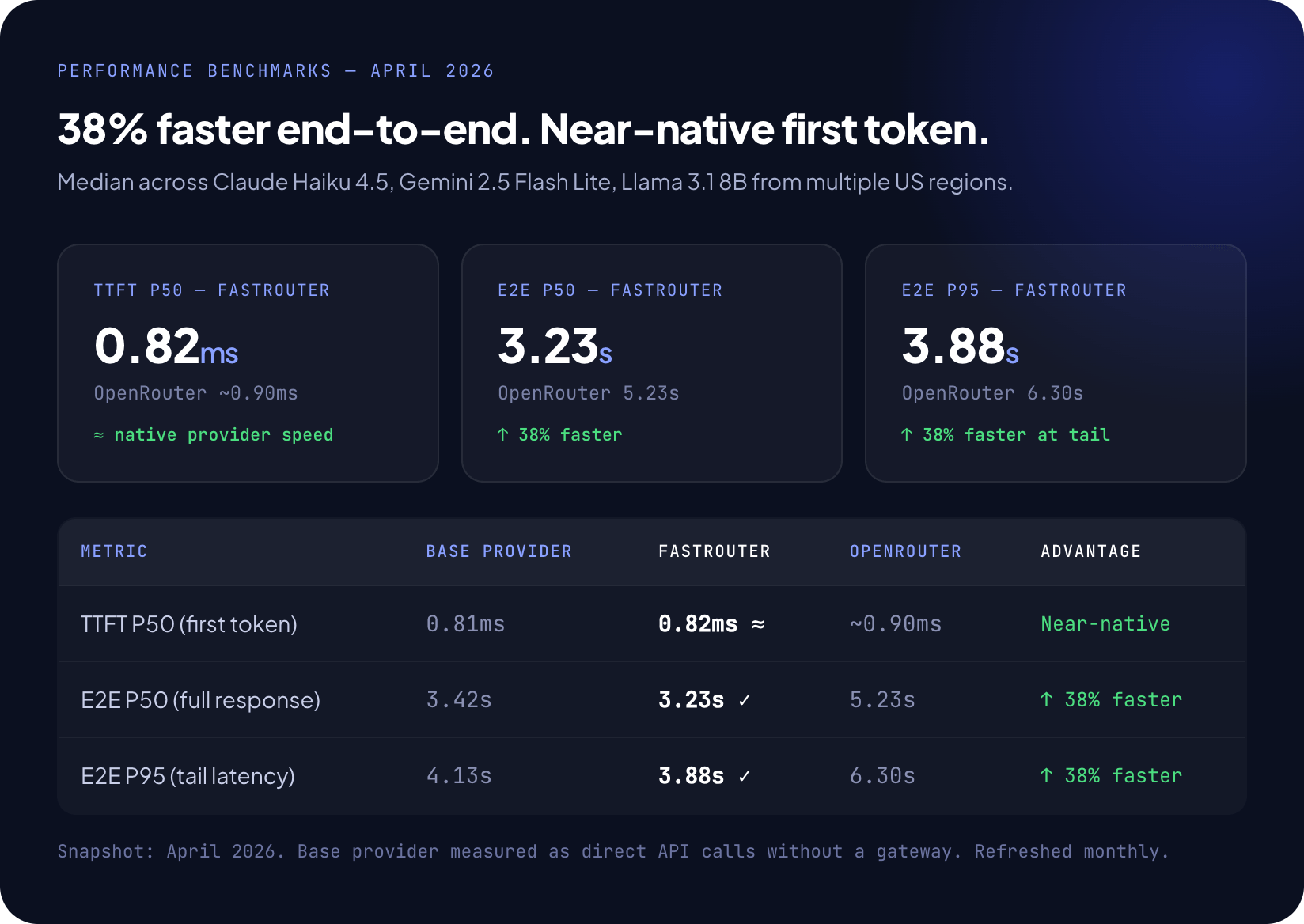

Measured, not claimed

We benchmark monthly across Claude Haiku 4.5, Gemini 2.5 Flash Lite, and Llama 3.1 8B from multiple US regions. Time-to-first-token (TTFT) is essentially identical between the two — both gateways are thin enough at the connection layer that the model's own first-token latency dominates. End-to-end is where the gap opens up.

FastRouter vs. OpenRouter

When OpenRouter is the right call

You're prototyping or model-shopping

- One key, every model, instant access

- 25+ free models with no credit card

- Best discovery surface in the category

You're building a consumer app with end-user keys

- PKCE OAuth flow is mature and battle-tested

- Each end user authenticates with their own account

- You don't carry inference cost yourself

Solo developer or low-volume project

- The 5.5% fee is rounding error at small scale

- No managed-platform overhead to justify

- Setup is genuinely under 10 minutes

You want maximum model breadth, not depth

- 290+ models, 60+ inference providers

- Niche or experimental models often appear here first

- Plugins for web search, PDF parsing, response healing

When FastRouter is the right call

Your monthly inference bill has crossed five figures

- 0% markup vs 5.5% on credit purchases

- Smart routing typically delivers 40–60% cost reduction

- The gateway tax stops compounding with growth

You need shared workspace budgets, not per-user caps

- Workspace-level kill-switches, not just per-key

- RBAC that engineering, product, and finance can all use

- Hard limits, not just alerts

You want evals and routing in one product

- Smart + Automatic Evaluations on live traffic

- GEPA prompt optimization runs continuously

- Eval signals feed routing decisions

You're running agentic workloads with MCP

- MCP credential vaulting — agents never see raw keys

- Per-tool budget caps and rate limits

- Audit trail across multi-step tool calls

Pick the right tool for your situation

1) IF -> You're under $2K/month in spend and still figuring out which models you need

Stay on OpenRouter. The fee is rounding error at this scale and the catalog breadth helps you decide.

2) IF -> You're between $2K and $10K/month and growing

Run the FastRouter 7-day audit in passive mode. No code changes. You'll see the routing efficiency and the projected savings before you commit.

3) IF -> You're over $10K/month or operating multiple workloads with different SLAs

Move to FastRouter. The 5.5% fee, governance gaps, and absent eval layer all start hurting at this point. Migration is straightforward — both are OpenAI-compatible.

4) IF -> You're shipping a consumer app where end users bring their own keys

Use OpenRouter. The PKCE OAuth flow is exactly what you need and FastRouter isn't built for that pattern.

5) IF -> You need shared budget enforcement, evals, and MCP credential vaulting in one platform

Use FastRouter. There's no equivalent on OpenRouter today.

Common questions

1) Can I migrate from OpenRouter to FastRouter without rewriting my code?

Yes. Both expose an OpenAI-compatible /v1/chat/completions endpoint. Migration is typically a base URL change and a key swap. Model IDs are aliased so existing requests don't break, and the FastRouter team will sit through the cutover with you for production workloads.
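A "base URL change and a key swap" looks roughly like this. The sketch uses only the standard library and builds the requests without sending them. The OpenRouter base URL is its public one; the FastRouter URL is a placeholder, not a real endpoint, so take the actual value from the FastRouter docs. The key strings and the model ID are also placeholders.

```python
import json
import urllib.request

OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"
# Placeholder, NOT a real endpoint: substitute the base URL from the FastRouter docs.
FASTROUTER_BASE_URL = "https://fastrouter.example/v1"


def build_chat_request(base_url, api_key, model, messages):
    """Build (but do not send) an OpenAI-compatible chat completions request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


messages = [{"role": "user", "content": "ping"}]
before = build_chat_request(OPENROUTER_BASE_URL, "OPENROUTER_KEY", "some/model-id", messages)
after = build_chat_request(FASTROUTER_BASE_URL, "FASTROUTER_KEY", "some/model-id", messages)

# Only the URL and the key differ; the JSON body is identical.
print(before.full_url)
print(after.full_url)
print(before.data == after.data)  # True
```

If you use an OpenAI-compatible SDK rather than raw HTTP, the same two values are usually the only constructor arguments that change.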

2) Is the 38% latency advantage real for my workload?

It depends on your model mix and request size. The benchmark above runs across three models from US regions. The 7-day audit will run real numbers on your actual traffic, so you don't have to take ours as gospel. TTFT is essentially identical between the two — the gap is in end-to-end, which is sensitive to retry behavior and provider selection.
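For reference, the 38% figure follows from the two P50 numbers quoted earlier on this page (3.23s and 5.23s). A quick check, which also shows how much the headline changes depending on which gateway you use as the baseline:

```python
fastrouter_p50_s = 3.23
openrouter_p50_s = 5.23

gap_s = openrouter_p50_s - fastrouter_p50_s

# Relative to OpenRouter: how much lower FastRouter's end-to-end P50 is.
reduction = gap_s / openrouter_p50_s
# Relative to FastRouter: how much higher OpenRouter's end-to-end P50 is.
increase = gap_s / fastrouter_p50_s

print(f"{reduction:.1%} lower than OpenRouter")   # 38.2% lower than OpenRouter
print(f"{increase:.1%} higher than FastRouter")   # 61.9% higher than FastRouter
```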

3) OpenRouter has 290+ models. Does FastRouter cover the same breadth?

FastRouter covers every major frontier provider and the most-used open-weight providers, but the long-tail catalog is smaller than OpenRouter's. If you specifically need an obscure or experimental model that only one provider hosts, OpenRouter may be the better discovery surface. If you need the top 30 models that drive 95% of production traffic, both cover them.

4) Does FastRouter support BYOK?

Yes. BYOK is the default model — you bring your provider keys and FastRouter charges nothing on top of inference. OpenRouter also supports BYOK with 1M free requests per month and a 5% fee on overage.

5) What about end-user OAuth like OpenRouter's PKCE flow?

FastRouter supports programmatic key provisioning, but does not ship a consumer-grade PKCE OAuth flow today. If your application needs each end user to authenticate against the gateway with their own account and bring their own credit balance, OpenRouter is purpose-built for that pattern and FastRouter isn't.

6) How does the 7-day audit work?

You point a fraction of your existing traffic — or a mirror of it — at FastRouter for seven days. No code changes are required beyond the base URL. At the end of the week you get a cost breakdown, a routing-efficiency report, and a projected savings number you can share with finance. If the numbers don't justify the move, you keep the report and walk away.

7) Can I use Langfuse, Braintrust, or another eval platform with both?

Yes for both. OpenRouter's Broadcast feature exports OpenTelemetry traces to most major observability backends. FastRouter exports OTel traces too — but also ships its own Smart and Automatic Evaluations, plus GEPA prompt optimization, so a third-party eval platform may be redundant.

See the difference on your own traffic

Run the FastRouter audit against your OpenRouter usage

Seven days. Passive. Zero code changes. We'll send back a routing-efficiency report and a projected cost delta you can show finance.
